Python 官方文档:入门教程 => 点击学习

jstack:Java堆栈跟踪工具 jstack(Stack Trace for Java)命令用于生成虚拟机当前时刻的线程快照(一般称为threaddump或者javacore文件

jstack(Stack Trace for Java)命令用于生成虚拟机当前时刻的线程快照(一般称为threaddump或者javacore文件)。线程快照就是当前虚拟机内每一条线程正在执行的方法堆栈的集合,生成线程快照的主要目的是定位线程出现长时间停顿的原因,如线程间死锁、死循环、请求外部资源导致的长时间等待等都是导致线程长时间停顿的常见原因。线程出现停顿的时候通过jstack来查看各个线程的调用堆栈,就可以知道没有响应的线程到底在后台做什么事情,或者等待什么资源。jstack的官方文档地址为:https://docs.oracle.com/javase/8/docs/technotes/tools/unix/jstack.html。

jstack命令格式:jstack [option] vmid

jstack的主要option选项如下:

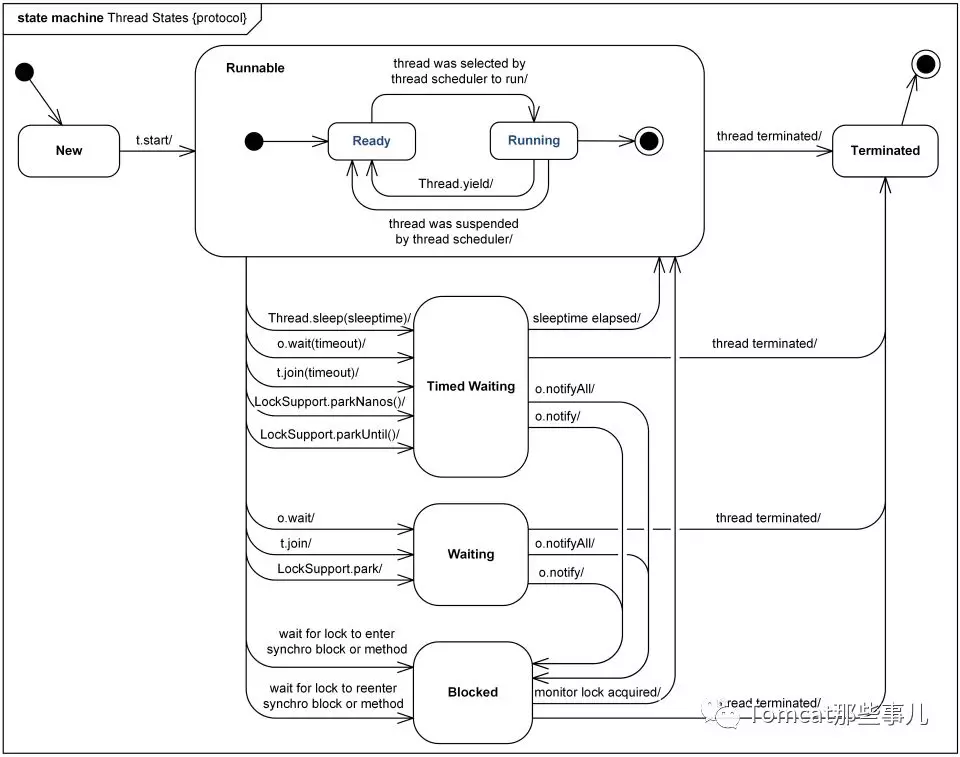

官网线程状态介绍:Https://docs.oracle.com/javase/8/docs/technotes/guides/troubleshoot/tooldescr034.html,如下所示

关于线程各个状态的转化,从网上找了一张图,画的挺详细的。

一般分析CPU飙高的步骤如下:

创建一个SpringBoot工程,controller程序如下,然后通过package -DMaven.test.skip=true打包之后放到linux上运行:

package com.wkp.jvm.chapter2;

import java.util.List;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.WEB.bind.annotation.RequestMapping;

import org.springframework.web.bind.annotation.RestController;

@RestController

public class CpuController {

Logger log=LoggerFactory.getLogger(CpuController.class);

@RequestMapping("/loop")

public List<Long> loop(){

log.info("演示死循环============");

while(true){

}

}

private Object lock1 = new Object();

private Object lock2 = new Object();

@RequestMapping("/deadlock")

public String deadlock() {

log.info("演示死锁============");

new Thread(()->{

synchronized(lock1) {

try {Thread.sleep(1000);} catch(Exception e) {}

synchronized(lock2){

System.out.println("Thread 1 over");

}

}

}).start();

new Thread(()->{

synchronized(lock2) {

try {Thread.sleep(1000);} catch(Exception e) {}

synchronized(lock1){

System.out.println("Thread 2 over");

}

}

}).start();

return "deadlock";

}

}通过http://ip:port/loop访问触发程序死循环,多访问几次之后,在Linux上通过top命令可以看到我们的Java应用程序CPU占用越来越高,系统负载也逐渐升高。

[root@wkp ~]# top

top - 18:13:31 up 321 days, 3:37, 4 users, load average: 13.14, 9.15, 4.72

Tasks: 128 total, 1 running, 108 sleeping, 19 stopped, 0 zombie

Cpu(s): 99.8%us, 0.2%sy, 0.0%ni, 0.0%id, 0.0%wa, 0.0%hi, 0.0%si, 0.0%st

Mem: 8193352k total, 7702892k used, 490460k free, 9204k buffers

Swap: 0k total, 0k used, 0k free, 75428k cached

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

26295 root 20 0 4524m 378m 13m S 184.8 4.7 13:51.51 java 通过top命令,我们观察到java进程26295占用CPU很高,系统负载也越来越高.然后我们通过top -Hp 26295查看进程的线程占用情况。

top -Hp pid 可以查看某个进程的线程信息,其中-H 显示线程信息,-p指定pid

[root@wkp~]# top -Hp 26295

top - 18:16:39 up 321 days, 3:40, 4 users, load average: 13.24, 11.14, 6.33

Tasks: 31 total, 12 running, 19 sleeping, 0 stopped, 0 zombie

Cpu(s): 99.8%us, 0.2%sy, 0.0%ni, 0.0%id, 0.0%wa, 0.0%hi, 0.0%si, 0.0%st

Mem: 8193352k total, 7673852k used, 519500k free, 9752k buffers

Swap: 0k total, 0k used, 0k free, 75508k cached

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND

26379 root 20 0 4524m 378m 13m R 15.6 4.7 2:36.42 java

26384 root 20 0 4524m 378m 13m R 15.6 4.7 1:41.46 java

26388 root 20 0 4524m 378m 13m R 15.6 4.7 1:10.34 java

5673 root 20 0 4524m 378m 13m R 15.6 4.7 1:07.09 java

26386 root 20 0 4524m 378m 13m R 15.3 4.7 1:17.68 java

26380 root 20 0 4524m 378m 13m R 15.0 4.7 2:18.31 java

26381 root 20 0 4524m 378m 13m R 15.0 4.7 2:05.06 java

26382 root 20 0 4524m 378m 13m R 15.0 4.7 1:45.69 java

26385 root 20 0 4524m 378m 13m R 15.0 4.7 1:20.29 java

26387 root 20 0 4524m 378m 13m R 15.0 4.7 1:13.59 java

5801 root 20 0 4524m 378m 13m R 15.0 4.7 0:51.41 java

26383 root 20 0 4524m 378m 13m R 14.6 4.7 1:55.11 java

26295 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.00 java

26305 root 20 0 4524m 378m 13m S 0.0 4.7 0:02.63 java

26306 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.21 java

26307 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.20 java

26311 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.36 java

26312 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.00 java

26314 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.01 java

26327 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.00 java

26328 root 20 0 4524m 378m 13m S 0.0 4.7 0:03.67 java

26329 root 20 0 4524m 378m 13m S 0.0 4.7 0:01.33 java

26330 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.00 java

26331 root 20 0 4524m 378m 13m S 0.0 4.7 0:01.79 java

26376 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.06 java

26377 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.00 java

26378 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.13 java

26389 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.13 java

26390 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.11 java

26391 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.00 java

26392 root 20 0 4524m 378m 13m S 0.0 4.7 0:00.16 java 然后我们执行 jstack 26295 > threadstack.txt 命令,将线程dump信息输出到txt文件中,然后下载下来。因为jstack打印出的线程堆栈中线程号是16进制的,所以我们从上面top -Hp命令打印出的线程中选取第一个26379,通过 printf "%x\n" 26379转为16进制得到670b。

[wkp@wkp4 Desktop]$ printf "%x\n" 26379

670b然后我们通过16进制的线程号670b,在threadstack.txt文件中搜索,可以搜到对应的线程(内容太多了,只贴了一小部分):可以看到 at com.wkp.jvm.chapter2.CpuController.loop(CpuController.java:21),第21行就对应我们的while(true)死循环,这样就找到了CPU飙高的原因了。

2019-09-24 18:17:55

Full thread dump Java HotSpot(TM) 64-Bit Server VM (25.162-b12 mixed mode):

//=========省略===============================================

"http-NIO-9999-exec-1" #16 daemon prio=5 os_prio=0 tid=0x00007fa06943c800 nid=0x670b runnable [0x00007fa01b3e9000]

java.lang.Thread.State: RUNNABLE

at com.wkp.jvm.chapter2.CpuController.loop(CpuController.java:21)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

//=========省略===============================================

"C1 CompilerThread1" #6 daemon prio=9 os_prio=0 tid=0x00007fa06813e000 nid=0x66d9 waiting on condition [0x0000000000000000]

java.lang.Thread.State: RUNNABLE

"C2 CompilerThread0" #5 daemon prio=9 os_prio=0 tid=0x00007fa06813b000 nid=0x66d8 waiting on condition [0x0000000000000000]

java.lang.Thread.State: RUNNABLE

"Finalizer" #3 daemon prio=8 os_prio=0 tid=0x00007fa068106800 nid=0x66ca in Object.wait() [0x00007fa054267000]

java.lang.Thread.State: WAITING (on object monitor)

at java.lang.Object.wait(Native Method)

- waiting on <0x0000000082ebc820> (a java.lang.ref.ReferenceQueue$Lock)

at java.lang.ref.ReferenceQueue.remove(ReferenceQueue.java:143)

- locked <0x0000000082ebc820> (a java.lang.ref.ReferenceQueue$Lock)

at java.lang.ref.ReferenceQueue.remove(ReferenceQueue.java:164)

at java.lang.ref.Finalizer$FinalizerThread.run(Finalizer.java:212)

"VM Thread" os_prio=0 tid=0x00007fa0680fa000 nid=0x66c7 runnable

"GC task thread#0 (ParallelGC)" os_prio=0 tid=0x00007fa06801e000 nid=0x66c2 runnable

"GC task thread#1 (ParallelGC)" os_prio=0 tid=0x00007fa068020000 nid=0x66c3 runnable

"VM Periodic Task Thread" os_prio=0 tid=0x00007fa068146000 nid=0x66db waiting on condition

JNI global references: 913下面我们对线程dump日志的格式做个介绍,以 "http-nio-9999-exec-1" #16 daemon prio=5 os_prio=0 tid=0x00007fa06943c800 nid=0x670b runnable [0x00007fa01b3e9000]为例:

其他的线程名含义:

我们演示一下死锁问题的查找:同样是启动上面的示例springboot程序,然后访问 http://ip:port/deadlock触发程序死锁。然后通过jstack导出线程dump文件,在文件末尾可以看到如下内容:

Found one Java-level deadlock:

=============================

"Thread-5":

waiting to lock monitor 0x00007fc978003778 (object 0x00000000833D0c20, a java.lang.Object),

which is held by "Thread-4"

"Thread-4":

waiting to lock monitor 0x00007fc978003568 (object 0x00000000833d0c30, a java.lang.Object),

which is held by "Thread-5"

Java stack infORMation for the threads listed above:

===================================================

"Thread-5":

at com.wkp.jvm.chapter2.CpuController.lambda$deadlock$1(CpuController.java:46)

- waiting to lock <0x00000000833d0c20> (a java.lang.Object)

- locked <0x00000000833d0c30> (a java.lang.Object)

at com.wkp.jvm.chapter2.CpuController$$Lambda$343/408916933.run(Unknown Source)

at java.lang.Thread.run(Thread.java:748)

"Thread-4":

at com.wkp.jvm.chapter2.CpuController.lambda$deadlock$0(CpuController.java:38)

- waiting to lock <0x00000000833d0c30> (a java.lang.Object)

- locked <0x00000000833d0c20> (a java.lang.Object)

at com.wkp.jvm.chapter2.CpuController$$Lambda$342/620117450.run(Unknown Source)

at java.lang.Thread.run(Thread.java:748)

Found 1 deadlock.可以看到线程dump中已经把出现死锁的线程打印出来了,Thread-4和Thread-5互相等待对方持有的锁。

其实关于线程的dump和死锁检查等,jdk提供的有可视化的工具,比如 jconsole和jvisualvm 也是可以做到的。

另外,我们还可以通过如下 Thread.getAllStackTraces() 打印线程信息

@RequestMapping("/getAllThreadStack")

public void getAllThreadStack() {

for (Entry<Thread, StackTraceElement[]> stackTrace : Thread

.getAllStackTraces().entrySet()) {

Thread thread = (Thread) stackTrace.geTKEy();

StackTraceElement[] stack = (StackTraceElement[]) stackTrace

.getValue();

if (thread.equals(Thread.currentThread())) {

continue;

}

System.out.println("线程:" + thread.getName());

for (StackTraceElement stackTraceElement : stack) {

System.out.println(stackTraceElement);

}

}

}到此这篇关于jstack配合top命令分析CPU飙高、程序死锁问题的文章就介绍到这了,更多相关Java 程序死锁内容请搜索编程网以前的文章或继续浏览下面的相关文章希望大家以后多多支持编程网!

--结束END--

本文标题: jstack配合top命令分析CPU飙高、程序死锁问题

本文链接: https://lsjlt.com/news/136554.html(转载时请注明来源链接)

有问题或投稿请发送至: 邮箱/279061341@qq.com QQ/279061341

2024-03-01

2024-03-01

2024-03-01

2024-02-29

2024-02-29

2024-02-29

2024-02-29

2024-02-29

2024-02-29

2024-02-29

回答

回答

回答

回答

回答

回答

回答

回答

回答

回答

0