
Contents: Introduction to LSTM; 1. The vanishing gradient problem in RNNs; 2. The structure of LSTM; LSTM-related functions in TensorFlow; tf.contrib.rnn.BasicLSTMCell; tf.nn.dynamic_

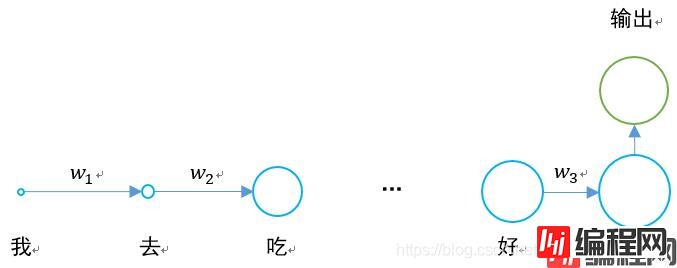

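Earlier we studied the recurrent neural network (RNN), whose structure is shown in this schematic:

Its biggest problem is that when weights such as w1, w2 and w3 are smaller than 1 in magnitude and the sentence is long enough, the signal shrinks at every step during both forward propagation and backpropagation, so the gradient vanishes.

For example, 0.9^25 ≈ 0.07: if a sentence has 20 to 30 characters, the hidden-layer output of the first character is scaled to roughly 0.07 of its original value by the time it reaches the end, so its influence is far smaller than that of the last character.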
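The arithmetic above can be checked with a few lines of Python. This is only a sketch: the factor 0.9 is the illustrative per-step shrink factor from the text, and `remaining_influence` is a hypothetical helper name, not part of any library.

```python
# Illustrative only: 0.9 stands in for the per-step shrink factor of the hidden state.
PER_STEP_FACTOR = 0.9


def remaining_influence(factor, steps):
    """Fraction of the first step's signal left after `steps` multiplications."""
    return factor ** steps


# The longer the sequence, the less of the first step survives.
for steps in (5, 25, 50):
    print(steps, round(remaining_influence(PER_STEP_FACTOR, steps), 4))
```

With a factor above 1 the same loop grows without bound instead, which is the mirror-image exploding gradient problem.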

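The situation looks like this:

Long short-term memory (LSTM) networks were introduced to solve this vanishing gradient problem.

The hidden layer of a plain RNN has only one state, h, which is passed from the first step to the last and is very sensitive to short-term input.

If we add a second state, c, and let it store the long-term state, the problem can be solved.

The single-step units of an RNN and an LSTM compare as follows.

Unrolling the LSTM structure along the time dimension:

We can see that at time step n the LSTM has three inputs:

1. the network input at the current time step;

2. the LSTM output from the previous time step;

3. the cell state from the previous time step.

The LSTM has two outputs:

1. the LSTM output at the current time step;

2. the cell state at the current time step.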

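3. LSTM's distinctive gate structure

LSTM uses two gates to control the contents of the cell state c_n:

1. the forget gate, which decides how much of the previous cell state c_{n-1} is kept at the current time step;

2. the input gate, which decides how much of the candidate state c'_n, computed from the current network input, is written into the cell state.

LSTM uses one gate to control the contents of the current output h_n:

the output gate, which decides how much of the current cell state c_n is exposed as output.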
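The three gates can be sketched as a toy scalar LSTM step in plain Python. This uses the standard LSTM update equations, not TensorFlow's implementation; the function name `lstm_step`, the `params` layout and all weight values are hypothetical and chosen only for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(x_n, h_prev, c_prev, params):
    """One step of a toy scalar LSTM.

    params maps 'f', 'i', 'o', 'g' to (w_x, w_h, b) for the forget gate,
    input gate, output gate and candidate state respectively.
    """
    def pre(name):
        w_x, w_h, b = params[name]
        return w_x * x_n + w_h * h_prev + b

    f = sigmoid(pre('f'))           # forget gate: how much of c_{n-1} to keep
    i = sigmoid(pre('i'))           # input gate: how much of c'_n to write
    o = sigmoid(pre('o'))           # output gate: how much of c_n to expose
    c_cand = math.tanh(pre('g'))    # candidate state c'_n
    c_n = f * c_prev + i * c_cand   # new cell state
    h_n = o * math.tanh(c_n)        # new output / hidden state
    return h_n, c_n


# Hypothetical weights; the forget-gate bias of 1.0 loosely mirrors forget_bias=1.0.
params = {
    'f': (0.5, 0.5, 1.0),
    'i': (0.5, 0.5, 0.0),
    'o': (0.5, 0.5, 0.0),
    'g': (1.0, 1.0, 0.0),
}

h, c = 0.0, 0.0
for x in [1.0, 0.5, -0.3]:
    h, c = lstm_step(x, h, c, params)
    print(round(h, 4), round(c, 4))
```

Because c_n is updated additively (f * c_{n-1} plus a new contribution) rather than being squashed through a weight matrix at every step, a forget gate close to 1 lets information survive over many steps.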

tf.contrib.rnn.BasicLSTMCell(
    num_units,
    forget_bias=1.0,
    state_is_tuple=True,
    activation=None,
    reuse=None,
    name=None,
    dtype=None
)

In use, it can be defined as:

lstm_cell = tf.contrib.rnn.BasicLSTMCell(self.cell_size, forget_bias=1.0, state_is_tuple=True)

Once it is defined, the state can be initialized:

self.cell_init_state = lstm_cell.zero_state(self.batch_size, dtype=tf.float32)

tf.nn.dynamic_rnn(
    cell,
    inputs,
    sequence_length=None,
    initial_state=None,
    dtype=None,
    parallel_iterations=None,
    swap_memory=False,
    time_major=False,
    scope=None
)

Once the LSTM cell is defined, this function is used to run it over the input sequence and obtain the results.

self.cell_outputs, self.cell_final_state = tf.nn.dynamic_rnn(
    lstm_cell, self.l_in_y, initial_state=self.cell_init_state, time_major=False)

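It returns a tuple (outputs, state):

outputs: the outputs of the (top-layer) cell at every time step, as a tensor. If time_major == False its shape is [batch_size, max_time, cell.output_size]; if time_major == True its shape is [max_time, batch_size, cell.output_size].

state: the final state, i.e. the state produced after the last time step of the sequence. In general its shape is [batch_size, cell.state_size]. When the cell is a BasicLSTMCell with state_is_tuple=True, state is a pair (c, h) holding the cell state and the hidden state, each of shape [batch_size, num_units]; taken together this corresponds to a shape of [2, batch_size, num_units].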
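To make these shapes concrete, here is a rough pure-Python sketch of what unrolling a cell over a batch-major input looks like. It is not how tf.nn.dynamic_rnn is implemented: `toy_cell` is a deliberately simple stand-in rather than a real LSTM, `unroll` is a hypothetical helper, and nested lists stand in for tensors.

```python
def toy_cell(x_t, state):
    """Stand-in cell (not a real LSTM): returns (output, (c, h)) like an LSTM cell would."""
    c_prev, _h_prev = state
    mean_x = sum(x_t) / len(x_t)
    c_new = [0.5 * c + 0.5 * mean_x for c in c_prev]
    h_new = [0.5 * c for c in c_new]
    return h_new, (c_new, h_new)


def unroll(cell, inputs, initial_state):
    """inputs is batch-major: [batch_size][max_time][input_size].

    Returns (outputs, (c, h)) where outputs is [batch_size][max_time][num_units]
    and c, h are each [batch_size][num_units].
    """
    c0, h0 = initial_state
    outputs, final_c, final_h = [], [], []
    for b, sequence in enumerate(inputs):
        state = (c0[b], h0[b])
        seq_out = []
        for x_t in sequence:            # one cell call per time step
            out, state = cell(x_t, state)
            seq_out.append(out)
        outputs.append(seq_out)
        final_c.append(state[0])
        final_h.append(state[1])
    return outputs, (final_c, final_h)


batch_size, max_time, input_size, num_units = 2, 3, 4, 5
inputs = [[[float(b + t + k) for k in range(input_size)]
           for t in range(max_time)] for b in range(batch_size)]
zeros = [[0.0] * num_units for _ in range(batch_size)]

outputs, (c_last, h_last) = unroll(toy_cell, inputs, (zeros, zeros))
print(len(outputs), len(outputs[0]), len(outputs[0][0]))   # batch, time, units
print(h_last == [seq[-1] for seq in outputs])              # final h vs. last output
```

The last line illustrates why a classifier can read either the last time step of outputs or the h part of the final state: for a cell whose output is h, and with no sequence_length masking, the two coincide.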

The complete LSTM definition is:

def add_input_layer(self):
    # xs starts with shape (256 batch, 28 steps, 28 inputs)
    # flatten to (256 batch * 28 steps, 28 inputs)
    l_in_x = tf.reshape(self.xs, [-1, self.input_size], name='to_2D')
    # input-layer weights and biases
    Ws_in = self._weight_variable([self.input_size, self.cell_size])
    bs_in = self._bias_variable([self.cell_size])
    # project to (256 batch * 28 steps, 256 hidden)
    with tf.name_scope('Wx_plus_b'):
        l_in_y = tf.matmul(l_in_x, Ws_in) + bs_in
    # (batch * n_steps, cell_size) ==> (batch, n_steps, cell_size)
    # (256*28, 256) -> (256, 28, 256)
    self.l_in_y = tf.reshape(l_in_y, [-1, self.n_steps, self.cell_size], name='to_3D')

def add_cell(self):
    # cell_size is the number of hidden units
    lstm_cell = tf.contrib.rnn.BasicLSTMCell(self.cell_size, forget_bias=1.0, state_is_tuple=True)
    # the zero state is sized for the batch fed at each step
    with tf.name_scope('initial_state'):
        self.cell_init_state = lstm_cell.zero_state(self.batch_size, dtype=tf.float32)
    # time_major=False: inputs are laid out as (batch, steps, features)
    self.cell_outputs, self.cell_final_state = tf.nn.dynamic_rnn(
        lstm_cell, self.l_in_y, initial_state=self.cell_init_state, time_major=False)

def add_output_layer(self):
    # output-layer weights and biases
    Ws_out = self._weight_variable([self.cell_size, self.output_size])
    bs_out = self._bias_variable([self.output_size])
    # pred has shape (batch, output_size), i.e. (256, 10)
    with tf.name_scope('Wx_plus_b'):
        # cell_final_state is (c, h); [-1] selects the hidden state h
        self.pred = tf.matmul(self.cell_final_state[-1], Ws_out) + bs_out
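The indexing `self.cell_final_state[-1]` works because the LSTM state is a named tuple with fields (c, h), so the last element is the hidden state h. A small stand-alone sketch with a locally defined tuple of the same field order (the string values are placeholders, not real tensors):

```python
from collections import namedtuple

# Local stand-in with the same (c, h) field order as TensorFlow's LSTMStateTuple.
LSTMStateTuple = namedtuple("LSTMStateTuple", ("c", "h"))

final_state = LSTMStateTuple(c="cell state tensor", h="hidden state tensor")

print(final_state[-1])                    # the h field
print(final_state[-1] is final_state.h)   # indexing and attribute access agree
```

Writing `self.cell_final_state.h` instead of `[-1]` would express the same thing more explicitly.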

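This example is handwritten digit recognition (MNIST): the 28 rows of each image are fed in as 28 time steps, and each step receives the 28 column values of that row as its input.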
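The row-by-row feeding can be illustrated without TensorFlow. The sketch below turns one flattened 784-value image into 28 steps of 28 values each; `to_sequence` is a hypothetical helper and the pixel values are placeholders rather than real MNIST data.

```python
TIME_STEPS = 28   # one step per image row
INPUT_SIZE = 28   # one input value per image column


def to_sequence(flat_pixels, time_steps=TIME_STEPS, input_size=INPUT_SIZE):
    """Split a flat row-major image into a list of rows, one row per time step."""
    if len(flat_pixels) != time_steps * input_size:
        raise ValueError("expected %d values" % (time_steps * input_size))
    return [flat_pixels[r * input_size:(r + 1) * input_size]
            for r in range(time_steps)]


flat = [float(i) for i in range(TIME_STEPS * INPUT_SIZE)]  # placeholder "pixels"
seq = to_sequence(flat)

print(len(seq), len(seq[0]))   # steps, inputs per step
print(seq[1][0])               # first value of the second row
```

This is the per-image equivalent of reshaping a batch to [BATCH_SIZE, TIME_STEPS, INPUT_SIZE].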

# Requires TensorFlow 1.x: tf.contrib, tf.placeholder and tf.Session
# are not available in the TensorFlow 2.x top-level API.
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data
import numpy as np

mnist = input_data.read_data_sets("MNIST_data", one_hot=True)

BATCH_SIZE = 256    # number of samples per batch
TIME_STEPS = 28     # the image has 28 rows, fed in as 28 steps
INPUT_SIZE = 28     # the image has 28 columns
OUTPUT_SIZE = 10    # 10 output classes
CELL_SIZE = 256     # number of hidden units in the LSTM cell
LR = 1e-3           # learning rate

def get_batch():
    # fetch one training batch and reshape it to (batch, steps, inputs)
    batch_xs, batch_ys = mnist.train.next_batch(BATCH_SIZE)
    batch_xs = batch_xs.reshape([BATCH_SIZE, TIME_STEPS, INPUT_SIZE])
    return [batch_xs, batch_ys]

class LSTMRNN(object):
    # builds the LSTM model
    def __init__(self, n_steps, input_size, output_size, cell_size, batch_size):
        self.n_steps = n_steps
        self.input_size = input_size
        self.output_size = output_size
        self.cell_size = cell_size
        self.batch_size = batch_size
        # inputs and labels
        with tf.name_scope('inputs'):
            self.xs = tf.placeholder(tf.float32, [None, n_steps, input_size], name='xs')
            self.ys = tf.placeholder(tf.float32, [None, output_size], name='ys')
        # input projection layer
        with tf.variable_scope('in_hidden'):
            self.add_input_layer()
        # LSTM cell
        with tf.variable_scope('LSTM_cell'):
            self.add_cell()
        # output projection layer
        with tf.variable_scope('out_hidden'):
            self.add_output_layer()
        # loss
        with tf.name_scope('cost'):
            self.compute_cost()
        # training op
        with tf.name_scope('train'):
            self.train_op = tf.train.AdamOptimizer(LR).minimize(self.cost)
        # accuracy
        self.correct_pre = tf.equal(tf.argmax(self.ys, 1), tf.argmax(self.pred, 1))
        self.accuracy = tf.reduce_mean(tf.cast(self.correct_pre, tf.float32))

    def add_input_layer(self):
        # xs starts with shape (256 batch, 28 steps, 28 inputs)
        # flatten to (256 batch * 28 steps, 28 inputs)
        l_in_x = tf.reshape(self.xs, [-1, self.input_size], name='to_2D')
        # input-layer weights and biases
        Ws_in = self._weight_variable([self.input_size, self.cell_size])
        bs_in = self._bias_variable([self.cell_size])
        # project to (256 batch * 28 steps, 256 hidden)
        with tf.name_scope('Wx_plus_b'):
            l_in_y = tf.matmul(l_in_x, Ws_in) + bs_in
        # (batch * n_steps, cell_size) ==> (batch, n_steps, cell_size)
        # (256*28, 256) -> (256, 28, 256)
        self.l_in_y = tf.reshape(l_in_y, [-1, self.n_steps, self.cell_size], name='to_3D')

    def add_cell(self):
        # cell_size is the number of hidden units
        lstm_cell = tf.contrib.rnn.BasicLSTMCell(self.cell_size, forget_bias=1.0, state_is_tuple=True)
        # the zero state is sized for the batch fed at each step
        with tf.name_scope('initial_state'):
            self.cell_init_state = lstm_cell.zero_state(self.batch_size, dtype=tf.float32)
        # time_major=False: inputs are laid out as (batch, steps, features)
        self.cell_outputs, self.cell_final_state = tf.nn.dynamic_rnn(
            lstm_cell, self.l_in_y, initial_state=self.cell_init_state, time_major=False)

    def add_output_layer(self):
        # output-layer weights and biases
        Ws_out = self._weight_variable([self.cell_size, self.output_size])
        bs_out = self._bias_variable([self.output_size])
        # pred has shape (batch, output_size), i.e. (256, 10)
        with tf.name_scope('Wx_plus_b'):
            # cell_final_state is (c, h); [-1] selects the hidden state h
            self.pred = tf.matmul(self.cell_final_state[-1], Ws_out) + bs_out

    def compute_cost(self):
        # mean softmax cross-entropy between logits and one-hot labels
        self.cost = tf.reduce_mean(
            tf.nn.softmax_cross_entropy_with_logits(logits=self.pred, labels=self.ys)
        )

    def _weight_variable(self, shape, name='weights'):
        # weights drawn from a standard normal distribution
        initializer = np.random.normal(0.0, 1.0, size=shape)
        return tf.Variable(initializer, name=name, dtype=tf.float32)

    def _bias_variable(self, shape, name='biases'):
        # biases start at 0.1
        initializer = np.ones(shape=shape) * 0.1
        return tf.Variable(initializer, name=name, dtype=tf.float32)

if __name__ == '__main__':
    # build the LSTMRNN model
    model = LSTMRNN(TIME_STEPS, INPUT_SIZE, OUTPUT_SIZE, CELL_SIZE, BATCH_SIZE)
    sess = tf.Session()
    sess.run(tf.global_variables_initializer())
    # train for 10000 steps
    for i in range(10000):
        xs, ys = get_batch()  # fetch one batch of data
        if i == 0:
            # first step: start from the zero state
            feed_dict = {
                model.xs: xs,
                model.ys: ys,
            }
        else:
            # later steps: carry over the previous final state, as in the original;
            # MNIST batches are independent, so this carry-over is optional
            feed_dict = {
                model.xs: xs,
                model.ys: ys,
                model.cell_init_state: state
            }
        # one training step
        _, cost, state, pred = sess.run(
            [model.train_op, model.cost, model.cell_final_state, model.pred],
            feed_dict=feed_dict)
        # print the accuracy on the current batch every 20 steps
        if i % 20 == 0:
            print(sess.run(model.accuracy, feed_dict={
                model.xs: xs,
                model.ys: ys,
                model.cell_init_state: state
            }))
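What compute_cost and the accuracy op calculate can be sketched in plain Python for a tiny batch. This is an illustration of mean softmax cross-entropy and argmax accuracy, not TensorFlow's implementation; the helper names and the logits and labels below are made up for the example.

```python
import math


def log_softmax(logits):
    """Numerically stable log-softmax of one row of logits."""
    m = max(logits)
    log_total = math.log(sum(math.exp(v - m) for v in logits))
    return [v - m - log_total for v in logits]


def cross_entropy(logits, one_hot):
    """Cross-entropy between softmax(logits) and a one-hot label."""
    return -sum(y * lp for y, lp in zip(one_hot, log_softmax(logits)))


def argmax(values):
    return max(range(len(values)), key=values.__getitem__)


def batch_cost_and_accuracy(batch_logits, batch_labels):
    """Mean cross-entropy and fraction of rows whose argmax matches the label."""
    pairs = list(zip(batch_logits, batch_labels))
    losses = [cross_entropy(l, y) for l, y in pairs]
    hits = [argmax(l) == argmax(y) for l, y in pairs]
    return sum(losses) / len(losses), sum(hits) / len(hits)


# Made-up logits and one-hot labels for a batch of three samples, three classes.
logits = [[2.0, 0.5, -1.0], [0.1, 0.2, 3.0], [1.0, 1.5, 0.0]]
labels = [[1, 0, 0], [0, 0, 1], [1, 0, 0]]

cost, acc = batch_cost_and_accuracy(logits, labels)
print(round(cost, 4), round(acc, 4))
```

In this batch the third sample is misclassified (the largest logit is at index 1 while the label is index 0), so two of three predictions count as correct.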

That concludes this walkthrough of building a long short-term memory (LSTM) network with TensorFlow in Python.


Article title: Building a long short-term memory (LSTM) network with TensorFlow in Python

Article link: https://lsjlt.com/news/117645.html (please cite the source link when reposting)
